Explainable and Verifiable Learning

Creating explainable and verifiable autonomous agents

Last updated: February 24, 2026

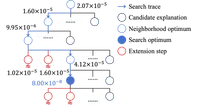

We work on methods to explain and verify the behaviors of autonomous agents, with a focus on agents that use learning-based decision-making policies. One way we do this is by inferring the objectives of a policy, expressed as a temporal logic specification, using neurosymbolic or heuristic search methods. We are also developing methods to create autonomous agents that are explainable by design, with an emphasis on integrating formal methods such as temporal logic with hierarchical reinforcement learning.
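To make "inferring a temporal logic specification from behavior" more concrete, here is a toy sketch. It is not our method, which uses neurosymbolic or heuristic search. Instead, it enumerates formulas of a small finite-trace LTL fragment in order of increasing size and returns the first one that holds on every positive trace (observed policy behavior) and on no negative trace. The operator set, the tuple encoding of formulas, the example traces, and the names `holds`, `formulas_of_size`, and `infer_spec` are all assumptions made for illustration.

```python
from functools import lru_cache
from typing import FrozenSet, List, Optional, Sequence, Tuple

# A trace is a finite sequence of states; each state is the set of atomic
# propositions that hold at that time step (illustrative encoding).
Trace = List[FrozenSet[str]]
# Formulas are nested tuples, e.g. ("U", ("not", ("ap", "hazard")), ("ap", "goal")).
Formula = tuple

UNARY = ("not", "X", "F", "G")
BINARY = ("and", "or", "U")


def holds(f: Formula, trace: Trace, t: int = 0) -> bool:
    """Finite-trace semantics of a small LTL fragment, evaluated at step t."""
    op, n = f[0], len(trace)
    if op == "ap":
        return f[1] in trace[t]
    if op == "not":
        return not holds(f[1], trace, t)
    if op == "and":
        return holds(f[1], trace, t) and holds(f[2], trace, t)
    if op == "or":
        return holds(f[1], trace, t) or holds(f[2], trace, t)
    if op == "X":  # strong next: false at the final step
        return t + 1 < n and holds(f[1], trace, t + 1)
    if op == "F":
        return any(holds(f[1], trace, k) for k in range(t, n))
    if op == "G":
        return all(holds(f[1], trace, k) for k in range(t, n))
    if op == "U":
        return any(holds(f[2], trace, k)
                   and all(holds(f[1], trace, j) for j in range(t, k))
                   for k in range(t, n))
    raise ValueError(f"unknown operator {op!r}")


@lru_cache(maxsize=None)
def formulas_of_size(size: int, props: Tuple[str, ...]) -> Tuple[Formula, ...]:
    """All formulas with exactly `size` syntax-tree nodes over `props`."""
    if size == 1:
        return tuple(("ap", p) for p in props)
    out = [(op, sub) for op in UNARY for sub in formulas_of_size(size - 1, props)]
    for op in BINARY:
        for k in range(1, size - 1):  # left subformula gets k nodes, right gets size-1-k
            for left in formulas_of_size(k, props):
                for right in formulas_of_size(size - 1 - k, props):
                    out.append((op, left, right))
    return tuple(out)


def infer_spec(positives: Sequence[Trace], negatives: Sequence[Trace],
               props: Tuple[str, ...], max_size: int = 5) -> Optional[Formula]:
    """Return a smallest formula true on all positives and false on all negatives."""
    for size in range(1, max_size + 1):
        for f in formulas_of_size(size, props):
            if all(holds(f, tr) for tr in positives) and \
               not any(holds(f, tr) for tr in negatives):
                return f
    return None


# Usage: positive traces reach "goal" without ever touching "hazard".
E, G, H = frozenset(), frozenset({"goal"}), frozenset({"hazard"})
positives = [[E, E, G], [E, G]]
negatives = [[E, H, G], [E, E, E]]
print(infer_spec(positives, negatives, ("goal", "hazard")))
```

Enumerating smallest-first biases the result toward short, readable specifications, which suits explanation. The cost is that the number of candidates grows exponentially with formula size. That growth is why practical approaches replace exhaustive enumeration with learned or heuristic guidance.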

We also work on algorithms for verifiable path tracking that combine reinforcement learning with contraction theory.
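As background on the contraction side, a standard result applies to closed-loop dynamics ẋ = f(x). If the symmetric part of the Jacobian, (J + Jᵀ)/2, is uniformly bounded above by -λI for some λ > 0 (contraction in the identity metric), then any two trajectories converge toward each other exponentially at rate λ. The sketch below estimates that rate by sampling states for a 2-D tracking-error system. The vector field `closed_loop_error` is a hypothetical hand-written stand-in for error dynamics under a fixed policy; it is not learned with reinforcement learning here. The helper names are likewise assumptions. Because a finite sample cannot rule out violations elsewhere, this is a sanity check, not a certificate.

```python
import math
import random
from typing import Callable, List, Tuple

Vec = Tuple[float, float]
Mat = Tuple[Tuple[float, float], Tuple[float, float]]


def jacobian(f: Callable[[Vec], Vec], x: Vec, h: float = 1e-6) -> Mat:
    """Central-difference Jacobian J[i][j] = d f_i / d x_j of a 2-D vector field."""
    cols = []
    for j in range(2):
        xp, xm = list(x), list(x)
        xp[j] += h
        xm[j] -= h
        fp, fm = f((xp[0], xp[1])), f((xm[0], xm[1]))
        cols.append(((fp[0] - fm[0]) / (2 * h), (fp[1] - fm[1]) / (2 * h)))
    return ((cols[0][0], cols[1][0]), (cols[0][1], cols[1][1]))


def max_sym_eig(J: Mat) -> float:
    """Largest eigenvalue of the symmetric part (J + J^T) / 2 of a 2x2 matrix."""
    a, d = J[0][0], J[1][1]
    b = 0.5 * (J[0][1] + J[1][0])
    return 0.5 * (a + d) + math.sqrt((0.5 * (a - d)) ** 2 + b * b)


def estimated_contraction_rate(f: Callable[[Vec], Vec], samples: List[Vec]) -> float:
    """Identity-metric contraction rate estimate: -max over samples of max_sym_eig(J).

    A positive value suggests (but, being sampled, does not prove) that
    trajectories converge toward each other at least at that exponential rate.
    """
    return -max(max_sym_eig(jacobian(f, x)) for x in samples)


def closed_loop_error(e: Vec) -> Vec:
    """Hypothetical tracking-error dynamics under a fixed feedback policy."""
    return (-2.0 * e[0] + 0.5 * math.sin(e[1]),
            -3.0 * e[1] + 0.5 * math.sin(e[0]))


rng = random.Random(0)
samples: List[Vec] = [(rng.uniform(-3, 3), rng.uniform(-3, 3)) for _ in range(1000)]
rate = estimated_contraction_rate(closed_loop_error, samples)
print(f"estimated contraction rate: {rate:.3f}")
```

For this particular field the bound can also be checked by hand. The symmetric part is [[-2, b], [b, -3]] with |b| ≤ 0.5, so its largest eigenvalue is at most -2.5 + √0.5 ≈ -1.79. The sampled estimate should therefore come out at roughly 1.79 or slightly above.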


This work was funded in part by ONR N00014-20-1-2249.